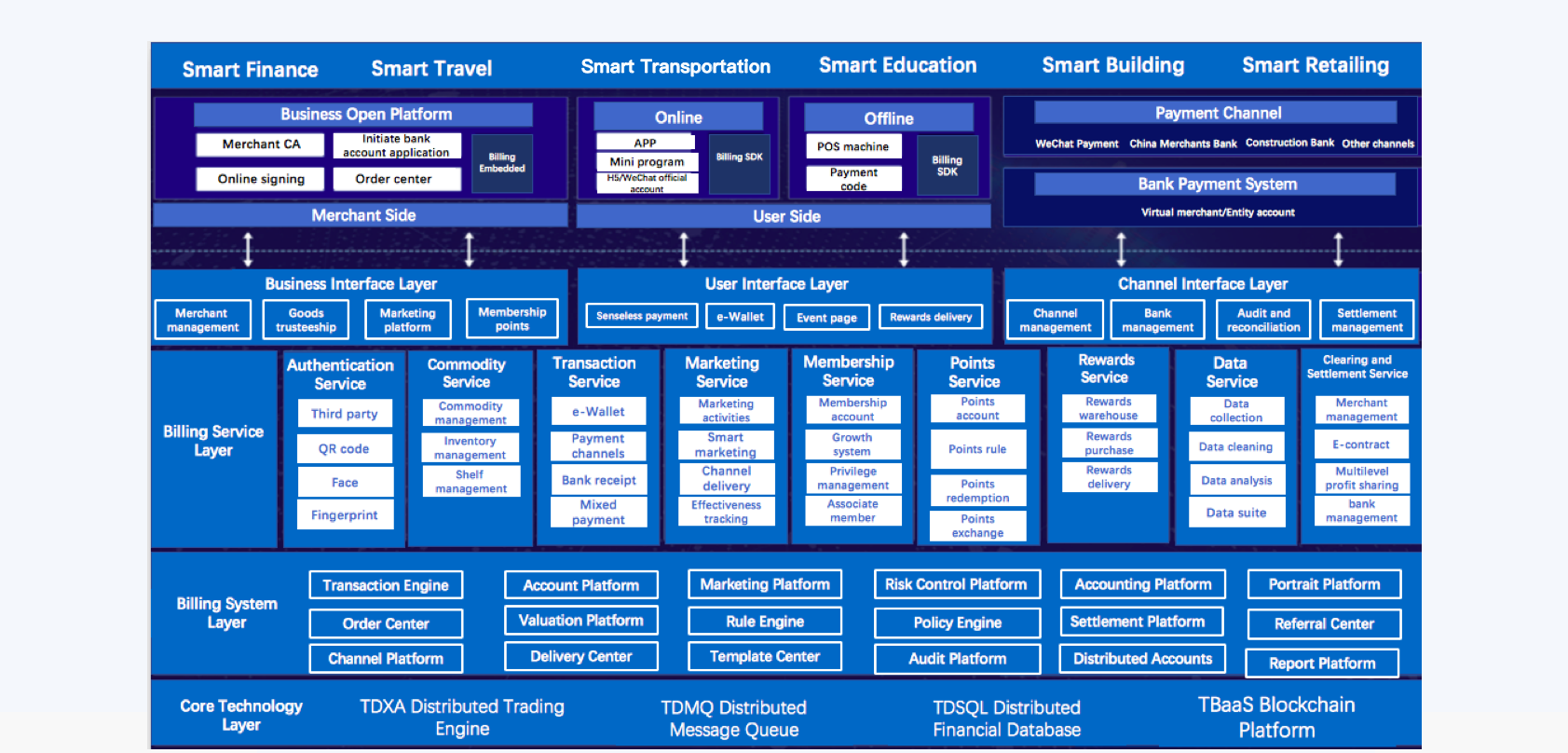

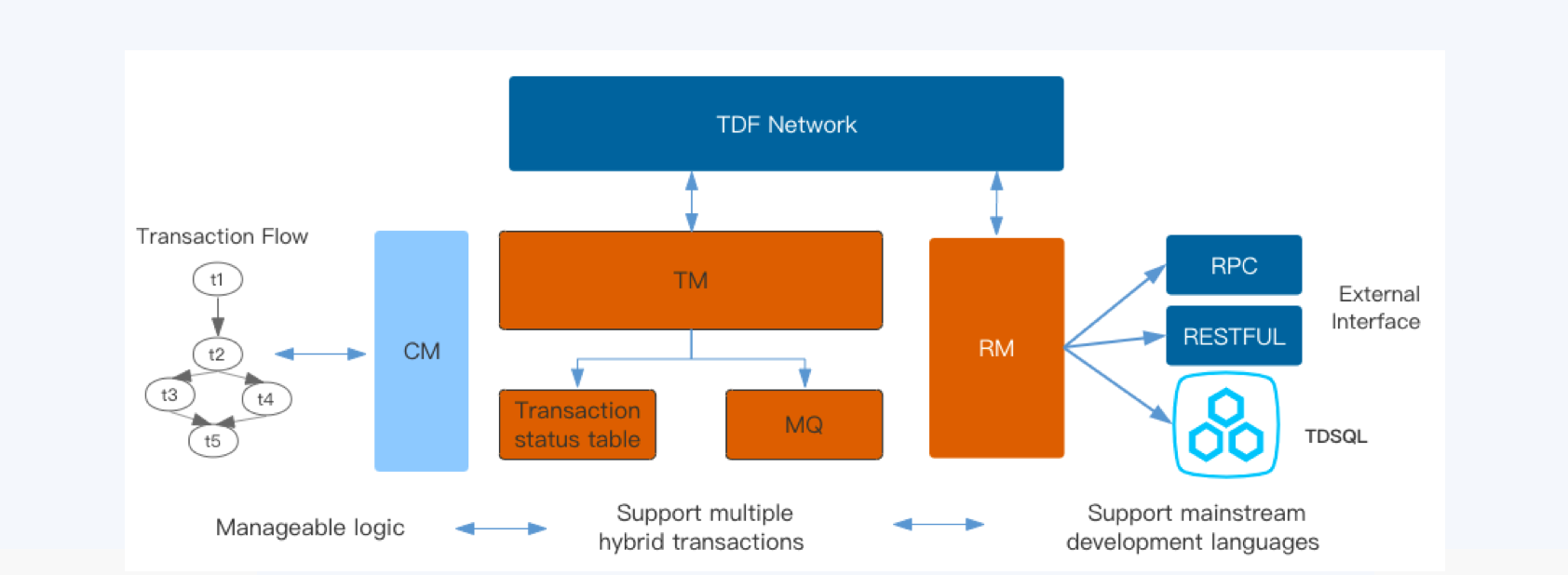

In Midas, the most critical challenge is ensuring data consistency in transactions. To address it, we developed TDXA, a distributed transaction framework designed to solve the consistency problem at the application layer. The architecture of TDXA is as follows.

The main components are described as follows.

- TM: A distributed transaction manager. As the control center of TDXA, it employs a decentralized approach to provide highly available services. TM supports both REST API based Try-Confirm/Cancel (TCC) and hybrid DB transactions. With TDF (an asynchronous coroutine framework) and asynchronous transaction processing in TDSQL, TM is able to support the billing business of the whole company in a highly efficient way.

- CM: The configuration center of TDXA. CM provides a flexible mechanism to register, manage and update transaction processing flows at runtime. It automatically checks the correctness and completeness of a transaction flow and visualizes it in a GUI console, where users can manage the flow.

- TDSQL: A distributed transactional database that offers strong consistency, high availability, a global deployment architecture, horizontal scalability, high performance, and enterprise-grade security. TDSQL provides a comprehensive distributed database solution.

- MQ: A highly consistent and available message queue, which TDXA requires in order to handle the various failures that occur while processing transactions.

As you can see, a highly consistent and available message queue plays a mission-critical role in processing transactions for our billing service.
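To make the Try-Confirm/Cancel (TCC) pattern mentioned for TM more concrete, here is a minimal sketch of a coordinator. It is not TDXA's actual API: `Participant`, `run_tcc` and the callback names are hypothetical, and the sketch simplifies by cancelling only the participants whose Try step succeeded.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Participant:
    """One service taking part in a TCC transaction (hypothetical interface)."""
    name: str
    try_: Callable[[], bool]     # reserve resources; return False to veto
    confirm: Callable[[], None]  # commit the reservation
    cancel: Callable[[], None]   # release the reservation


def run_tcc(participants: List[Participant]) -> bool:
    """Run Try on every participant, then Confirm all or Cancel those that tried."""
    tried: List[Participant] = []
    for p in participants:
        try:
            ok = p.try_()
        except Exception:
            ok = False  # treat an error or timeout in Try as a veto
        if not ok:
            # Release reservations in reverse order of acquisition.
            for done in reversed(tried):
                done.cancel()
            return False
        tried.append(p)
    # Every Try succeeded, so commit all reservations.
    for p in tried:
        p.confirm()
    return True
```

In a production system the Confirm and Cancel calls can fail as well, which is where a reliable message queue for retries becomes necessary.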

Message queue in billing service

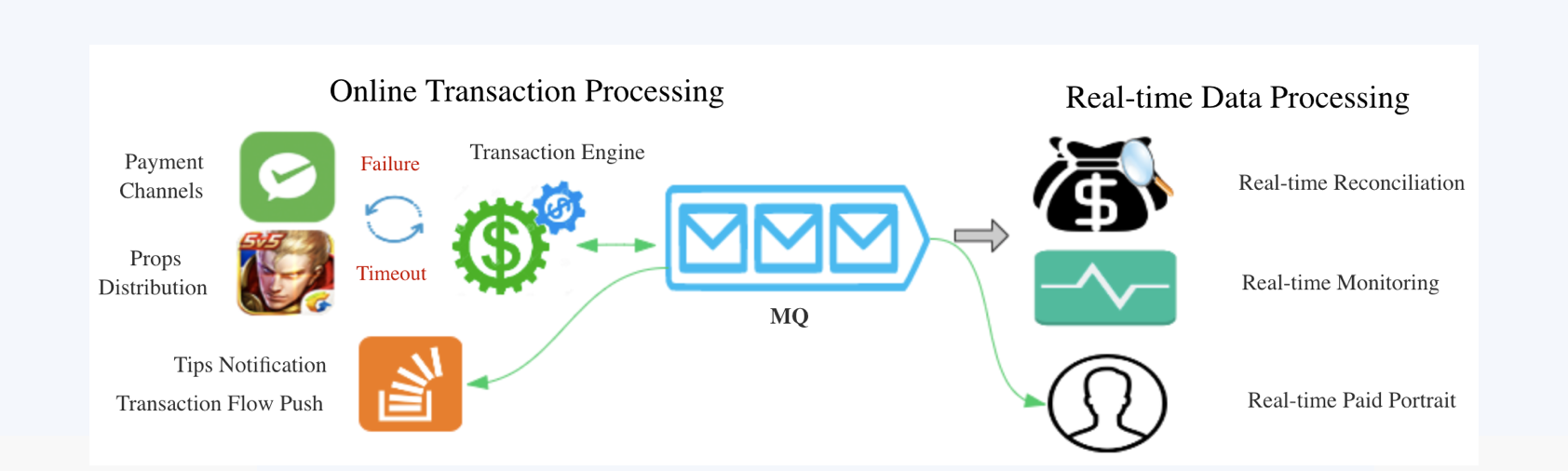

The usage of a message queue in our billing service can be divided into two categories: online transaction processing, and real-time data processing.

Online transaction processing

Midas has more than 80 channels with various characteristics and more than 300 distinct pieces of business processing logic. A single payment workflow often involves many internal and external systems. This leads to longer RPC chains and more failures, especially network timeouts (e.g. when interacting with overseas payment services).

TDXA leverages a message queue to reliably handle failures that occur while processing transactions. By combining the message queue with local transaction state, TDXA is able to resume a transaction after a failure and ensure the consistency of billions of transactions daily.
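The idea of resuming from recorded state can be illustrated with a small sketch. It assumes a hypothetical three-step workflow (`STEPS`), an in-memory dict standing in for the local transaction state, and a `deque` standing in for the message queue; none of these names come from TDXA.

```python
from collections import deque

STEPS = ["charge", "deliver", "notify"]  # hypothetical workflow steps


def process(txn_id, state, queue, handlers):
    """Run the remaining steps of a transaction, resuming from recorded state."""
    start = state.get(txn_id, 0)  # index of the next step to run
    for i in range(start, len(STEPS)):
        try:
            handlers[STEPS[i]](txn_id)
        except Exception:
            queue.append(txn_id)  # publish a retry message for later
            return False
        state[txn_id] = i + 1  # record progress after each completed step
    return True


def drain(state, queue, handlers, max_rounds=10):
    """Consume retry messages until the queue is empty or rounds run out."""
    for _ in range(max_rounds):
        if not queue:
            break
        process(queue.popleft(), state, queue, handlers)
```

Because progress is recorded only after a step returns, a step may run twice if the process crashes in between, so the handlers in such a design need to be idempotent.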

Real-time data processing

The second challenge in a billing platform is proving the data consistency of the transactions. We verify it by using a reconciliation system. The shorter the reconciliation time, the sooner a problem is detected. For mobile payments, real-time user experience is critical. For example, if a hero is not delivered promptly after being purchased in the King of Glory game, the user experience inevitably suffers, which results in complaints.

We reconcile the billing transactions in real time by using a stream computing engine to process the transactions produced in the message queue.
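As an illustration of streaming reconciliation, the sketch below matches payment events against delivery events and flags orders that were paid but not delivered within a deadline. The event shape `(timestamp_s, kind, order_id)` and the function name are assumptions made for this example, not the format used in Midas.

```python
def reconcile(events, deadline_s):
    """Flag orders that were paid but not delivered within deadline_s seconds.

    events: iterable of (timestamp_s, kind, order_id) tuples ordered by
    timestamp, where kind is "paid" or "delivered".
    Returns (late_order_ids, still_pending_order_ids).
    """
    pending = {}  # order_id -> time of payment
    late = []
    for ts, kind, order_id in events:
        # Event time advances with each record; flag every expired order.
        for oid, paid_at in list(pending.items()):
            if ts - paid_at > deadline_s:
                late.append(oid)
                del pending[oid]
        if kind == "paid":
            pending[order_id] = ts
        elif kind == "delivered":
            pending.pop(order_id, None)
    return late, sorted(pending)
```

A real stream job would keep the pending set in partitioned state and use timers instead of scanning it on every event; the linear scan here only keeps the sketch short.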

TDXA leverages the message queue in both online transaction processing and real-time data processing to ensure the effectiveness and consistency of a transaction.

Other scenarios

During peak periods (for example, a King of Glory anniversary celebration event), the transaction traffic of Midas can burst to more than 10 times the average. The message queue buffers such peak traffic, which reduces the pressure on the core transaction system from requests such as transaction inquiries, delivery and tips notification.

Also, with the ability to process messages in a message queue in real-time, we are able to offer real-time data analysis and provide precise marketing services for our customers.

Why Apache Pulsar

The requirements of a distributed message queue for our billing platform are summarized as follows:

- Strong consistency: A billing service cannot tolerate any data loss. This is the basic requirement.

- High availability: It must have failover capability and recover automatically from failures.

- Massive storage: Mobile applications generate a large amount of transaction data, so massive storage capacity is required.

- Low latency: A payment service handling billions of transactions must receive messages with predictably low latency (less than 10ms).

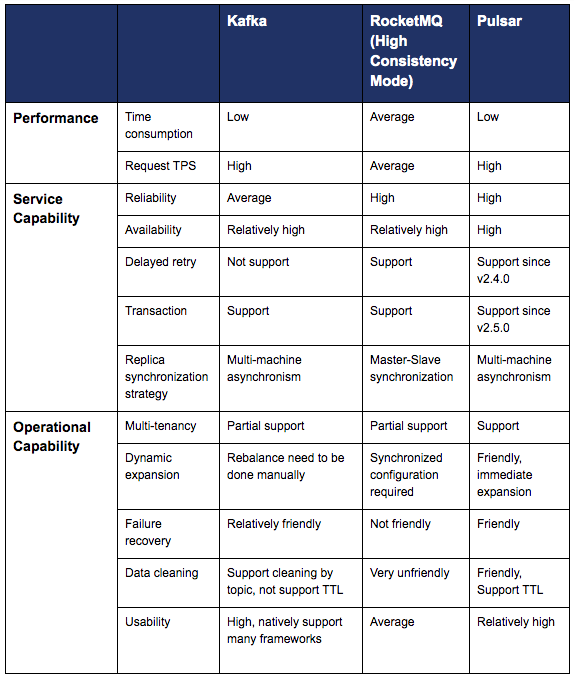

We evaluated many open source solutions for Midas. Kafka is popular for log collection and processing; however, it is rarely used in mission-critical financial use cases due to its problems of data consistency and durability. RocketMQ doesn't provide a user-friendly API for administering topics (e.g. you cannot delete invalid messages on a per-topic basis), and its open source version doesn't provide failover capability. We chose Pulsar because of its native strong consistency. Apache Pulsar provides highly available storage based on Apache BookKeeper and adopts a decoupled architecture, so the storage and processing layers can scale independently. Pulsar also supports several consumption modes and geo-replication.

The following is a summary of our comparison of Kafka, RocketMQ and Pulsar.

Adopting Pulsar

In the process of adopting Pulsar at Tencent, we made changes to Pulsar in order to meet our requirements. The changes are summarized as follows.

- Support delayed messages and delayed retries (supported in version 2.4.0).

- Support secondary tags.

- Improve the management console to support message query and consumption tracking.

- Improve the monitoring and alerting system.

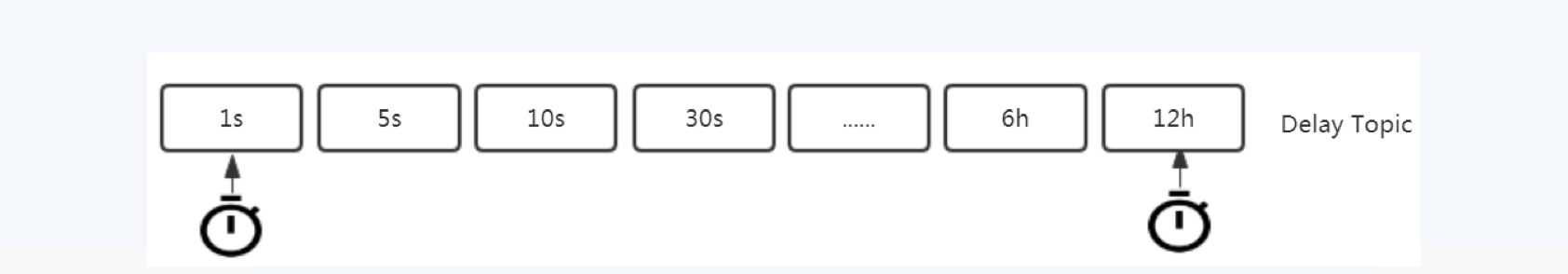

Delayed message delivery

Delayed message delivery is a common requirement in billing services. For example, it can be used to handle timeouts in transaction processing. When a service fails or times out, there is no need to retry a transaction many times in a short period, because it is likely to fail again. It makes more sense to retry with backoff by leveraging delayed message delivery in Pulsar.
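A backoff schedule of this kind can be computed with a few lines of code. The sketch below assumes capped exponential backoff; the function name and the default values are illustrative, not the settings used in Midas. The resulting delay would be attached to the retry message through whatever delayed-delivery mechanism is in use.

```python
def retry_delay_s(attempt, base_s=1.0, factor=2.0, max_s=300.0):
    """Return the delay in seconds before retry number `attempt` (1-based).

    The delay grows geometrically with each attempt and is capped at max_s.
    """
    if attempt < 1:
        raise ValueError("attempt must be >= 1")
    return min(max_s, base_s * factor ** (attempt - 1))
```

With the defaults above, the first four retries wait 1, 2, 4 and 8 seconds, and later retries level off at 300 seconds.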

Delayed message delivery can be implemented in two different ways. One is to segregate messages into different topics based on the delay interval; the broker checks those delay topics periodically according to their interval and delivers the delayed messages accordingly.

This approach satisfies most requirements, except when you want to specify an arbitrary delay. The second approach uses a time wheel, which supports delayed delivery at second-level granularity. However, this approach has to maintain an index for the time wheel, which makes it unsuitable for a large volume of delayed messages.
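The two approaches can be sketched as follows. This is a simplified, in-memory illustration and not the implementation we added to Pulsar: the delay levels, the topic naming scheme, and the `TimeWheel` class are all assumptions made for the example.

```python
from bisect import bisect_left

# Hypothetical fixed delay levels in seconds, one delay topic per level.
DELAY_LEVELS_S = (1, 5, 10, 30, 60, 300)


def delay_topic_for(delay_s, levels=DELAY_LEVELS_S):
    """Approach 1: map a requested delay to the smallest level that covers it."""
    i = min(bisect_left(levels, delay_s), len(levels) - 1)
    return f"retry-delay-{levels[i]}s"  # hypothetical topic naming scheme


class TimeWheel:
    """Approach 2: a single-level time wheel with one slot per tick."""

    def __init__(self, slots=60):
        self.slots = [[] for _ in range(slots)]
        self.now = 0  # current tick

    def schedule(self, delay_ticks, message):
        if delay_ticks < 1:
            raise ValueError("delay must be at least one tick")
        due = self.now + delay_ticks
        # Delays longer than one revolution share a slot; `due` tells them apart.
        self.slots[due % len(self.slots)].append((due, message))

    def tick(self):
        """Advance one tick and return the messages that are now due."""
        self.now += 1
        slot = self.slots[self.now % len(self.slots)]
        ready = [m for due, m in slot if due <= self.now]
        slot[:] = [(due, m) for due, m in slot if due > self.now]
        return ready
```

The sketch shows the trade-off described above: fixed levels round every delay to a preset interval, while the time wheel accepts any delay but keeps an entry in memory for every pending message.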

While keeping Pulsar internal storage unchanged, we implemented the above two approaches to support bargaining activities for the King of Glory game.

Secondary tag

Midas has tens of thousands of businesses. To improve security, it is necessary to synchronize transaction flow by business. If you create a topic per business, you need to create tens of thousands of topics, which increases the burden of topic management; and if a consumer needs to consume messages from all the businesses involved in a transaction flow, Midas has to maintain tens of thousands of subscriptions.

Hence we introduced a Tag attribute in Pulsar message metadata. Users can set multiple tags on a message when producing it. When messages are consumed, the broker filters them by tag and delivers only the messages that carry the desired tags.
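The filtering rule can be expressed in a few lines. The sketch below assumes that a message is delivered when it carries at least one of the tags a consumer asked for; the `Message` type and `filter_by_tags` function are hypothetical and only model the behaviour, not the broker code.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Message:
    payload: str
    tags: FrozenSet[str] = frozenset()  # tags set by the producer


def filter_by_tags(messages: Iterable[Message], wanted: Iterable[str]) -> List[Message]:
    """Keep the messages that carry at least one of the wanted tags."""
    wanted_set = frozenset(wanted)
    return [m for m in messages if m.tags & wanted_set]
```

With this model, one topic can carry the traffic of many businesses, and each consumer subscribes once while naming only the business tags it cares about.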

Management Console

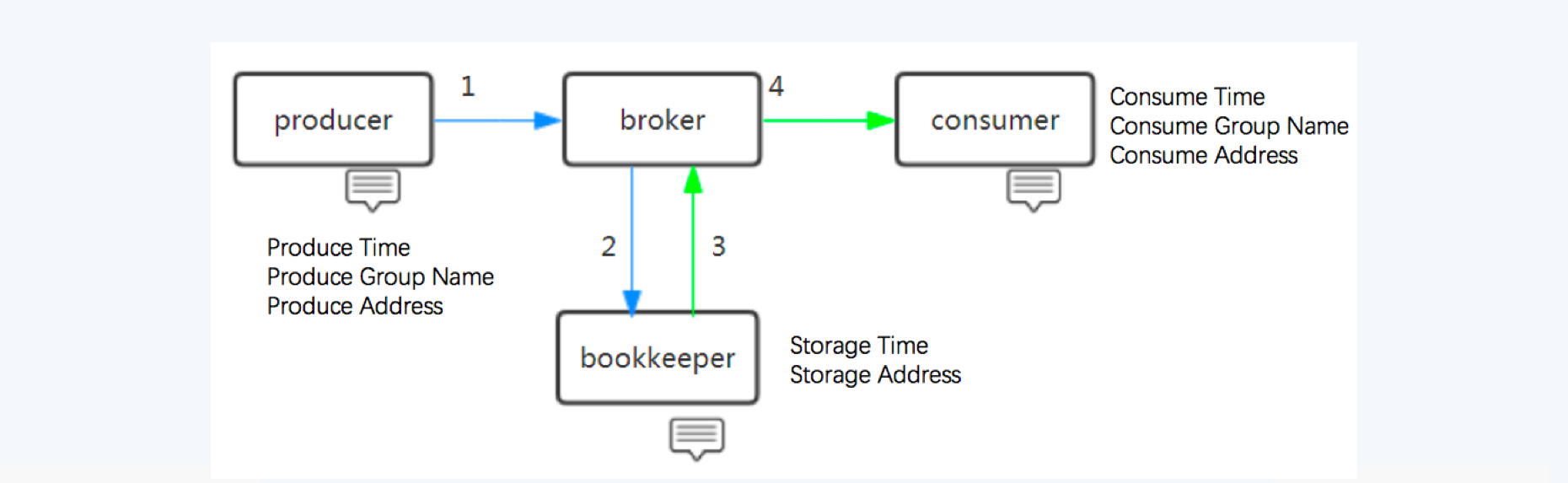

Using message queues at large scale requires a good management console. The management console should be able to answer the following questions from our users.

- What is the content of this message?

- Who is the producer of this message?

- Is this message consumed? Who is the consumer?

To answer these questions, we added lifecycle-related information to Pulsar message metadata, so we can track the entire life cycle of a message (from production to consumption).

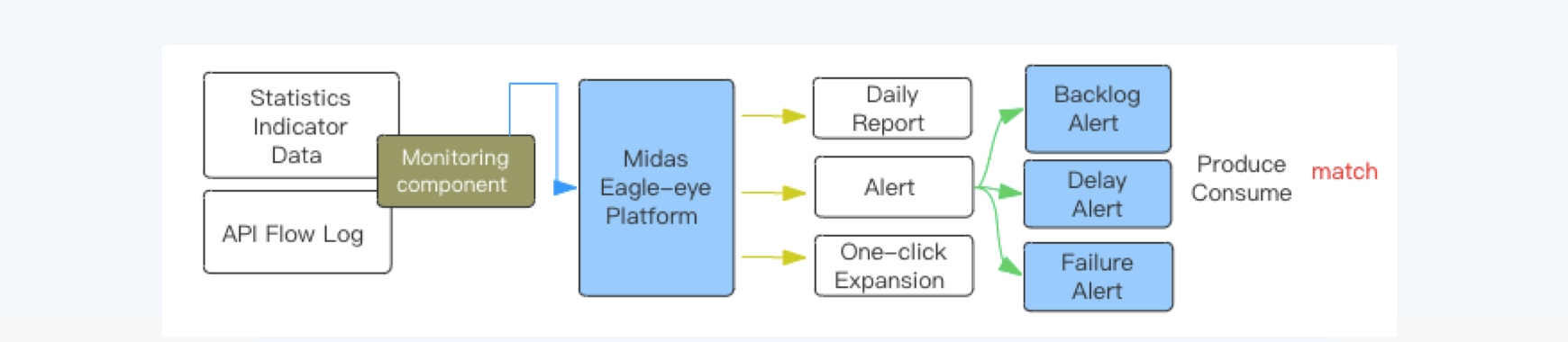

Monitor and alert

We collect the metrics from Pulsar and store them in our Eagle-Eye ops platform. Thus, we can write alert rules to monitor the system.

We monitor and alert on the following metrics:

- Backlog: If a large number of messages accumulate for online services, consumption has become a bottleneck. In that case, a timely alert is needed so that the relevant personnel can deal with it.

- End-to-end latency: In the transaction record query scenario, a purchase record must be searchable within a second. By matching the production flow and consumption flow collected by the monitoring component, we can compute the end-to-end latency of each message.

- Failures: The Eagle-Eye ops platform aggregates errors in the pipeline, and monitors and alerts on them along various dimensions such as business, IP and others.
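The backlog and latency checks above can be sketched by joining production and consumption records on the message ID. The record format (a dict from message ID to a timestamp in milliseconds), the function names, and the thresholds are assumptions for illustration and do not reflect the Eagle-Eye rule syntax.

```python
def end_to_end_latencies(produced, consumed):
    """Return message_id -> latency in ms for every message seen on both sides."""
    return {mid: consumed[mid] - sent for mid, sent in produced.items() if mid in consumed}


def backlog(produced, consumed):
    """Return the IDs of messages that were produced but not yet consumed."""
    return sorted(mid for mid in produced if mid not in consumed)


def alerts(produced, consumed, max_latency_ms=1000, max_backlog=2):
    """Evaluate two simple alert rules and return the ones that fire."""
    found = []
    latencies = end_to_end_latencies(produced, consumed)
    slow = sorted(mid for mid, ms in latencies.items() if ms > max_latency_ms)
    if slow:
        found.append(("latency", slow))
    pending = backlog(produced, consumed)
    if len(pending) > max_backlog:
        found.append(("backlog", pending))
    return found
```

The one-second default for `max_latency_ms` mirrors the query requirement mentioned above; the backlog threshold is arbitrary.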

Pulsar in Midas

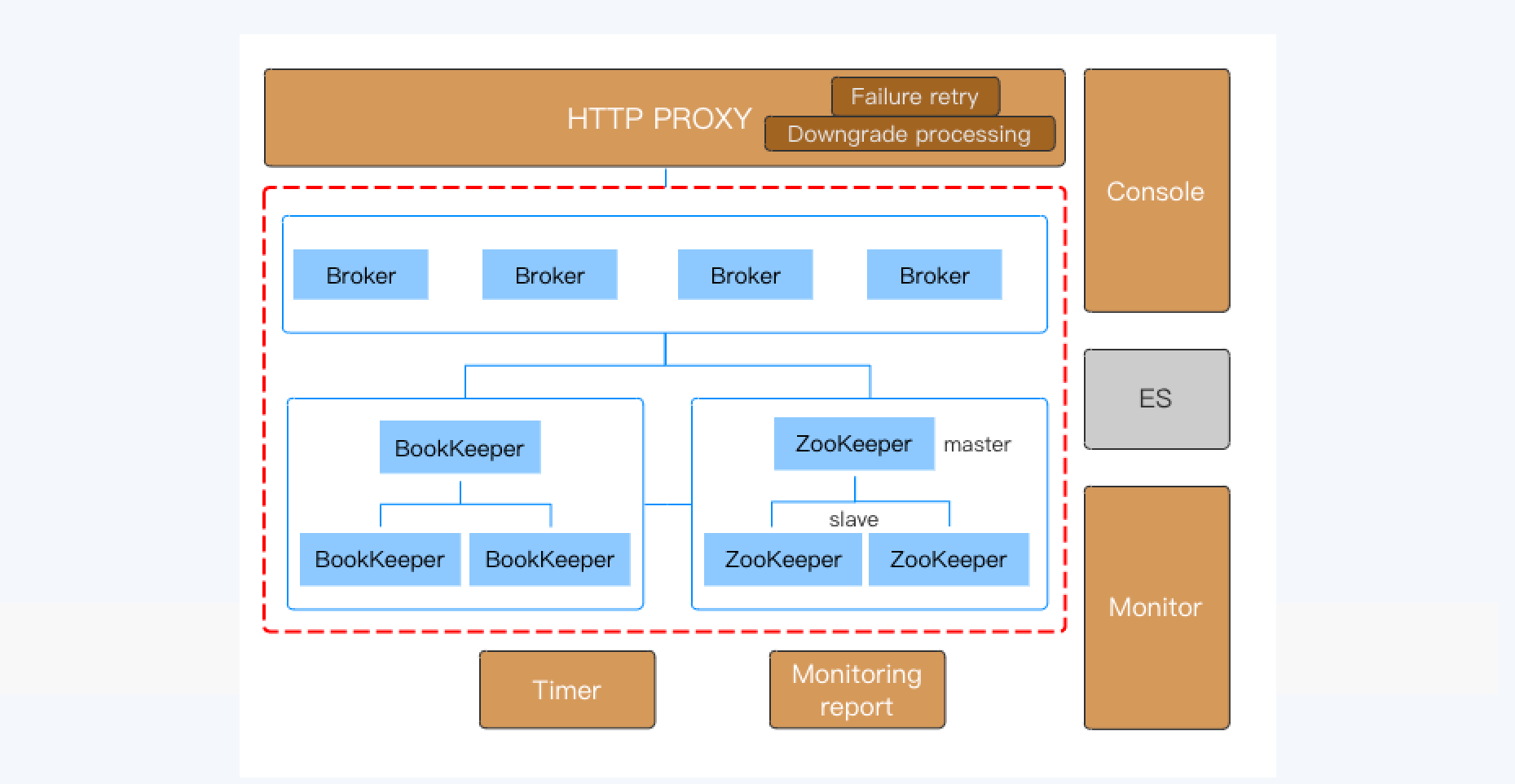

With the enhancements we made in Pulsar, we deployed Pulsar in the following architecture.

- As the message queue proxy layer, the broker handles message production and consumption requests. Brokers scale horizontally and rebalance topics automatically according to load.

- BookKeeper serves as the distributed storage for message queues. You can configure multiple replicas of messages in BookKeeper, and BookKeeper provides failover capability when failures occur.

- ZooKeeper serves as the metadata and cluster configuration center for message queues.

- Some Midas businesses are written in JS and PHP. The HTTP proxy provides a unified access endpoint and retry capability for clients written in other languages. When the production cluster fails, the proxy degrades gracefully and routes messages to other clusters for disaster recovery.

- Pulsar supports various consumption modes. Shared subscription allows consumption to scale beyond the number of partitions, and Failover subscription works well for stream processing in the transaction cleanup workflow.
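The difference between the two subscription modes mentioned above can be modelled with a toy dispatcher. This is a deliberately simplified simulation for a single topic, not Pulsar's dispatch logic: round-robin stands in for Shared, and "the first live consumer by name receives everything" stands in for Failover. The function names are hypothetical.

```python
from itertools import cycle


def dispatch_shared(messages, consumers):
    """Shared: spread messages across all consumers in round-robin order."""
    out = {c: [] for c in consumers}
    for message, consumer in zip(messages, cycle(consumers)):
        out[consumer].append(message)
    return out


def dispatch_failover(messages, consumers, failed=()):
    """Failover: one active consumer gets every message; a standby takes over."""
    alive = sorted(c for c in consumers if c not in failed)
    out = {c: [] for c in consumers}
    if alive:
        out[alive[0]].extend(messages)  # ordering is preserved on one consumer
    return out
```

In this model, Shared lets you add consumers to increase throughput regardless of partition count, while Failover keeps messages in order on a single consumer, which suits stream processing.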

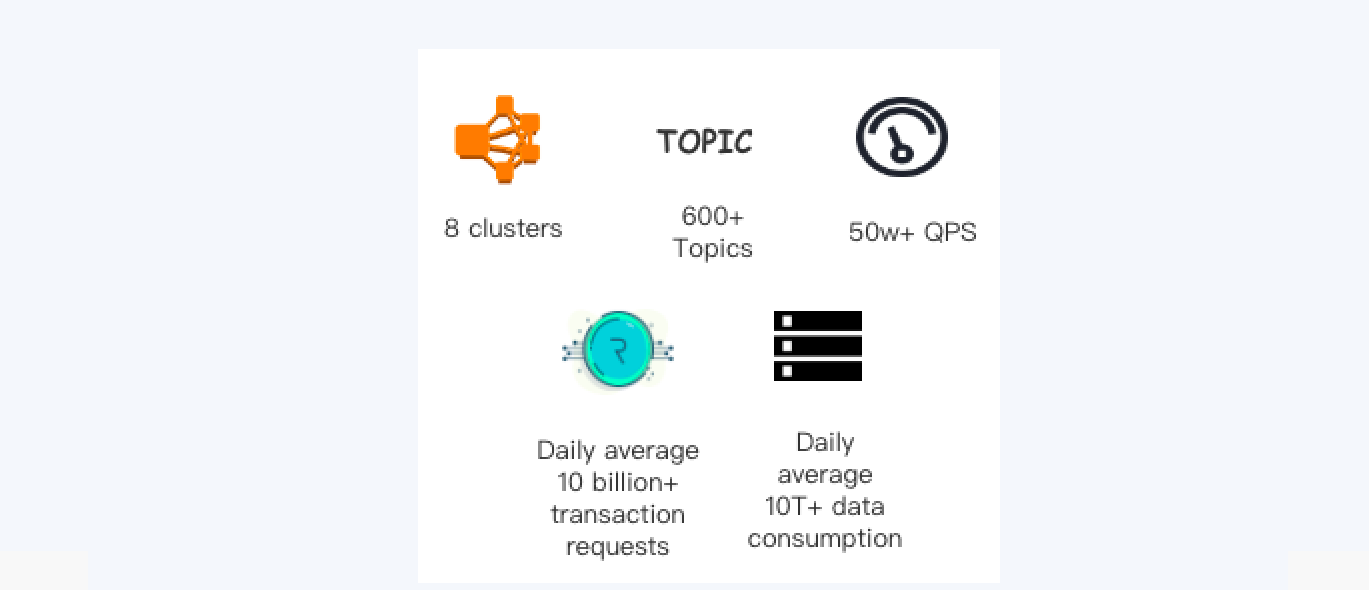

We have successfully adopted and run Pulsar at a very large scale. It handles tens of billions of transactions during peak time, guarantees data consistency in processing transactions, and provides 99.999% availability for our services. The high consistency, availability and stability of Pulsar help our billing and transaction engine run very efficiently.

Summary

Apache Pulsar is a young open source project with attractive features. The Apache Pulsar community is growing fast, with new adoptions in different industries. We'd like to collaborate further with the Apache Pulsar community, contribute our improvements back, and work with other users to further improve Pulsar.