In this article, we take an in-depth look at data placement policies in Apache Pulsar, which help us isolate data and achieve different levels of disaster tolerance. Before that, we first need to understand isolation policies in Pulsar.

Isolation policies in Apache Pulsar

In a Pulsar deployment, a single Pulsar instance often provides services to multiple teams. When organizing resources across teams, you need a suitable isolation plan that avoids resource competition between teams and applications, so that every team gets high-quality messaging services. This means taking resource isolation into consideration and weighing your intended actions against expected and unexpected consequences.

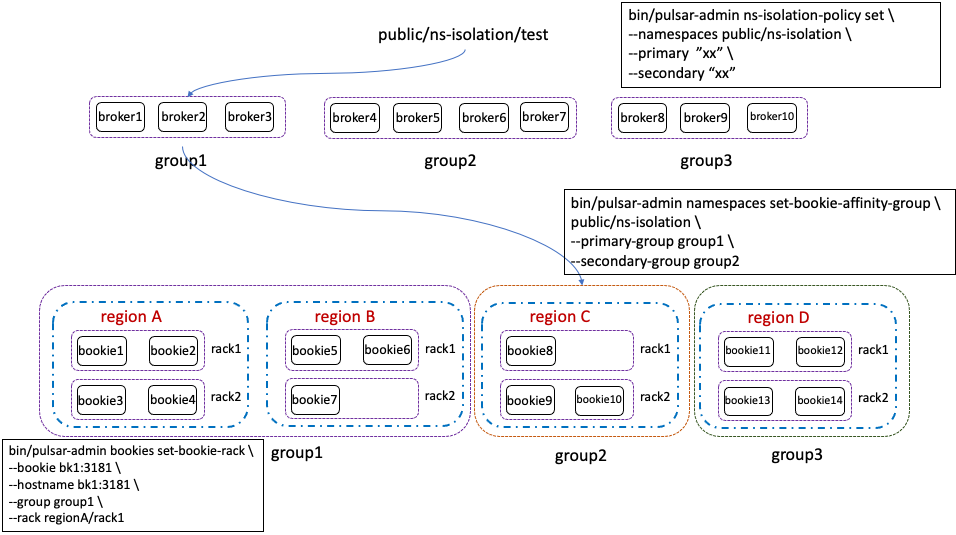

Pulsar supports isolation at both the broker level and the BookKeeper level. As shown in the image above, for broker-level isolation, you can divide brokers into different groups and assign groups to each namespace. In this way, topics in the namespace are bound to the set of brokers that belong to those groups. For detailed information, refer to Pulsar Isolation Part IV: Single Cluster Isolation.

Pulsar brokers not only provide message services, but also support message storage isolation through their BookKeeper clients. For message services, we can bind topics to a set of brokers that own them. For message storage isolation, we need to configure BookKeeper data placement policies for the BookKeeper clients.

Because this is a large topic, in this article we will mainly focus on BookKeeper’s data placement policy and provide guidance on how to configure these policies with pulsar-admin commands.

Bookie data isolation level

Bookie data isolation is controlled by the bookie client. For Pulsar, there are two kinds of bookie clients to read and write data. One is on the broker side. Pulsar brokers use bookie clients to read and write topic messages. The other one is on the bookie autoRecovery side. The bookie auditor will check whether ledger replicas fulfill the expected placement policy and the bookie replication worker will write ledger replicas to target bookies according to the configured placement policy.

To enable a placement policy for Pulsar, we should configure it both on the Pulsar broker and BookKeeper auto recovery side. To do so, we can simply use the bin/pulsar-admin bookies set-bookie-rack command. This command will write the placement policy into ZooKeeper, and both bookie clients on the broker and auto recovery side will read the placement policy from ZooKeeper and apply it.

BookKeeper provides two placement policies:

- RackawareEnsemblePlacementPolicy

- RegionAwareEnsemblePlacementPolicy

You can use either policy in any deployment where a rack is a subset of a region, that is, where each region contains one or more racks.

Now let’s take a deeper dive into how RackawareEnsemblePlacementPolicy and RegionAwareEnsemblePlacementPolicy work.

Achieving rack-level disaster tolerance

RackawareEnsemblePlacementPolicy forces different data replicas to be placed in different racks, which provides rack-level disaster tolerance. A data center usually has many racks, and each rack has many storage nodes. In production, we need to place different data replicas in different racks so that the data survives the failure of a whole rack. Once we configure rack information for each bookie node, RackawareEnsemblePlacementPolicy uses it to spread replicas across racks.

If you use the RackawareEnsemblePlacementPolicy, you should configure bookie instances with their own rack. The related commands are as follows:

bin/pulsar-admin bookies set-bookie-rack --bookie bookie1:3181 --hostname bookie1.pulsar.com:3181 --group group1 --rack /rack1

bin/pulsar-admin bookies set-bookie-rack --bookie bookie2:3181 --hostname bookie2.pulsar.com:3181 --group group1 --rack /rack1

bin/pulsar-admin bookies set-bookie-rack --bookie bookie3:3181 --hostname bookie3.pulsar.com:3181 --group group1 --rack /rack1

bin/pulsar-admin bookies set-bookie-rack --bookie bookie4:3181 --hostname bookie4.pulsar.com:3181 --group group1 --rack /rack1

bin/pulsar-admin bookies set-bookie-rack --bookie bookie5:3181 --hostname bookie5.pulsar.com:3181 --group group1 --rack /rack2
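When a cluster has many bookies, it can help to generate these commands from a single inventory rather than typing them by hand. The following is a minimal sketch of that idea. The `BOOKIES` dictionary and the helper names are hypothetical; only the command and its flags come from the commands above.

```python
import shlex

# Hypothetical inventory: bookie address -> (hostname, group, rack).
# The values mirror the commands above; adjust them for a real cluster.
BOOKIES = {
    "bookie1:3181": ("bookie1.pulsar.com:3181", "group1", "/rack1"),
    "bookie2:3181": ("bookie2.pulsar.com:3181", "group1", "/rack1"),
    "bookie3:3181": ("bookie3.pulsar.com:3181", "group1", "/rack1"),
    "bookie4:3181": ("bookie4.pulsar.com:3181", "group1", "/rack1"),
    "bookie5:3181": ("bookie5.pulsar.com:3181", "group1", "/rack2"),
}


def set_rack_command(bookie, hostname, group, rack):
    """Build one set-bookie-rack invocation as an argument list."""
    return [
        "bin/pulsar-admin", "bookies", "set-bookie-rack",
        "--bookie", bookie,
        "--hostname", hostname,
        "--group", group,
        "--rack", rack,
    ]


def render_commands(bookies):
    """Render one shell-safe command line per bookie in the inventory."""
    return [
        shlex.join(set_rack_command(address, *info))
        for address, info in bookies.items()
    ]


for line in render_commands(BOOKIES):
    print(line)
```

Keeping the inventory in one place makes it easier to review which bookie belongs to which rack and group before anything is written to ZooKeeper.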

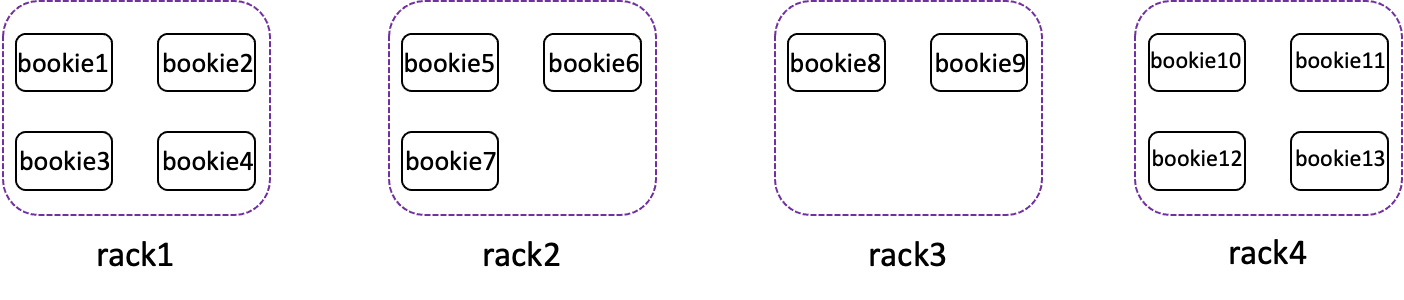

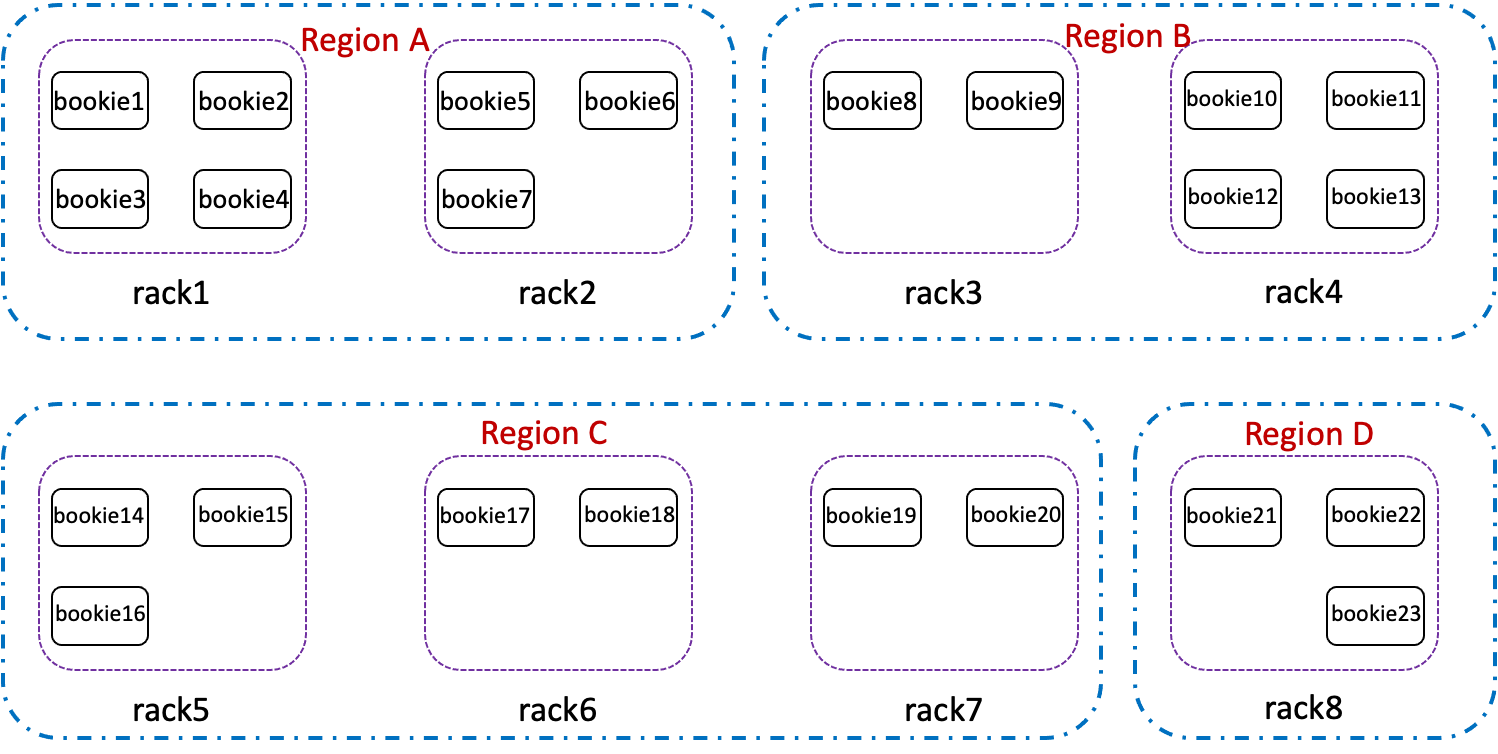

In Figure 2, the BookKeeper cluster has 4 racks and 13 bookie instances. If a topic is configured with EnsembleSize = 3, WriteQuorum=3, AckQuorum=2, the bookie client will choose 3 racks from the 4 total racks, such as rack 1, rack 3, and rack 4. For each rack, it will choose 1 bookie instance to write, such as bookie 1, bookie 8, and bookie 12.
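The selection described here can be illustrated with a small simulation. This is a simplified model of the idea, not BookKeeper's actual implementation. The split of the 13 bookies across the 4 racks is an assumption, since the text does not give the exact layout, and `pick_rack_aware_ensemble` is a hypothetical helper.

```python
import random

# Assumed layout: the text gives 4 racks and 13 bookies but not the exact
# split, so this mapping is illustrative only.
RACKS = {
    "/rack1": ["bookie1", "bookie2", "bookie3", "bookie4"],
    "/rack2": ["bookie5", "bookie6", "bookie7"],
    "/rack3": ["bookie8", "bookie9", "bookie10"],
    "/rack4": ["bookie11", "bookie12", "bookie13"],
}


def pick_rack_aware_ensemble(racks, ensemble_size, rng):
    """Pick ensemble_size bookies, using a different rack for each while possible."""
    pools = {rack: list(bookies) for rack, bookies in racks.items() if bookies}
    if sum(len(b) for b in pools.values()) < ensemble_size:
        raise ValueError("not enough bookies for the requested ensemble size")

    ensemble = []
    while len(ensemble) < ensemble_size:
        # Each pass visits every remaining rack once, in random order,
        # and takes at most one bookie from it.
        for rack in rng.sample(sorted(pools), len(pools)):
            if len(ensemble) == ensemble_size:
                break
            bookie = rng.choice(pools[rack])
            pools[rack].remove(bookie)
            ensemble.append((rack, bookie))
            if not pools[rack]:
                del pools[rack]
    return ensemble


# EnsembleSize = 3: three different racks, one bookie from each.
print(pick_rack_aware_ensemble(RACKS, 3, random.Random(7)))
```

With an ensemble size no larger than the number of racks, every replica lands in a different rack, so losing a single rack removes at most one replica.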

If all of the bookie instances in rack 3 and rack 4 have failed and the 3-rack requirement cannot be met, the client will choose bookies for new ledger creation and old ledger recovery based on the EnforceMinNumRacksPerWriteQuorum and MinNumRacksPerWriteQuorum fields.

- If you set EnforceMinNumRacksPerWriteQuorum=true and MinNumRacksPerWriteQuorum=3, the bookie client will fail to choose bookies to write to and throw a BKNotEnoughBookiesException because there are only 2 racks available and MinNumRacksPerWriteQuorum=3 is not fulfilled. This means that the new ledger cannot be created and that the old ledger cannot be recovered.

- If you set EnforceMinNumRacksPerWriteQuorum=true and MinNumRacksPerWriteQuorum=2, the requirement is fulfilled by the 2 available racks. For ledger recovery, suppose the old ledger’s ensemble is <bookie1, bookie8, bookie12>. The bookie client will choose 2 bookies from rack1 and rack2, such as bookie2 and bookie7, to hold the 2 replicas that were on the failed bookies. For new ledger creation, the bookie client will choose 2 bookies from rack1 and rack2, such as bookie1 and bookie6, and randomly choose one more bookie for the last replica.

- If you set EnforceMinNumRacksPerWriteQuorum=false, the minimum number of racks is not enforced. For ledger recovery, suppose the old ledger’s ensemble is <bookie1, bookie8, bookie12>. The bookie client will choose 2 bookies from rack1 and rack2, such as bookie2 and bookie7, to hold the 2 replicas that were on the failed bookies. For new ledger creation, the bookie client will choose 2 bookies from rack1 and rack2, such as bookie2 and bookie5, and randomly choose one more bookie for the last replica.
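The three cases above can be sketched as a small simulation. It is a simplified model, not BookKeeper's code: `NotEnoughBookies` stands in for BKNotEnoughBookiesException, the function name is hypothetical, and the bookies listed for rack1 and rack2 are an assumed layout.

```python
import random


class NotEnoughBookies(Exception):
    """Stand-in for BookKeeper's BKNotEnoughBookiesException."""


def choose_with_min_racks(alive_racks, ensemble_size, min_racks, enforce, rng):
    """Choose bookies from the racks that are still alive.

    When enforce is True, selection fails if fewer than min_racks racks
    are available. Otherwise the client spreads replicas over as many
    racks as it can and fills the remaining slots with random bookies.
    """
    pools = {rack: list(b) for rack, b in alive_racks.items() if b}
    if sum(len(b) for b in pools.values()) < ensemble_size:
        raise NotEnoughBookies("fewer bookies than the ensemble size")
    if enforce and len(pools) < min_racks:
        raise NotEnoughBookies(
            f"{len(pools)} racks available, {min_racks} required"
        )

    chosen = []
    # First take one bookie from each available rack.
    for rack in rng.sample(sorted(pools), len(pools)):
        if len(chosen) == ensemble_size:
            break
        bookie = rng.choice(pools[rack])
        pools[rack].remove(bookie)
        chosen.append((rack, bookie))

    # Then fill the remaining slots with randomly chosen leftover bookies.
    leftovers = [(rack, b) for rack, bs in pools.items() for b in bs]
    chosen += rng.sample(leftovers, ensemble_size - len(chosen))
    return chosen


# rack3 and rack4 have failed; only rack1 and rack2 are alive (assumed layout).
ALIVE = {
    "/rack1": ["bookie1", "bookie2", "bookie3", "bookie4"],
    "/rack2": ["bookie5", "bookie6", "bookie7"],
}

print(choose_with_min_racks(ALIVE, 3, 2, True, random.Random(1)))
try:
    choose_with_min_racks(ALIVE, 3, 3, True, random.Random(1))
except NotEnoughBookies as err:
    print("selection failed:", err)
```

The sketch makes the trade-off visible: enforcing the minimum protects the placement guarantee at the cost of availability, while disabling enforcement keeps writes going with weaker rack diversity.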

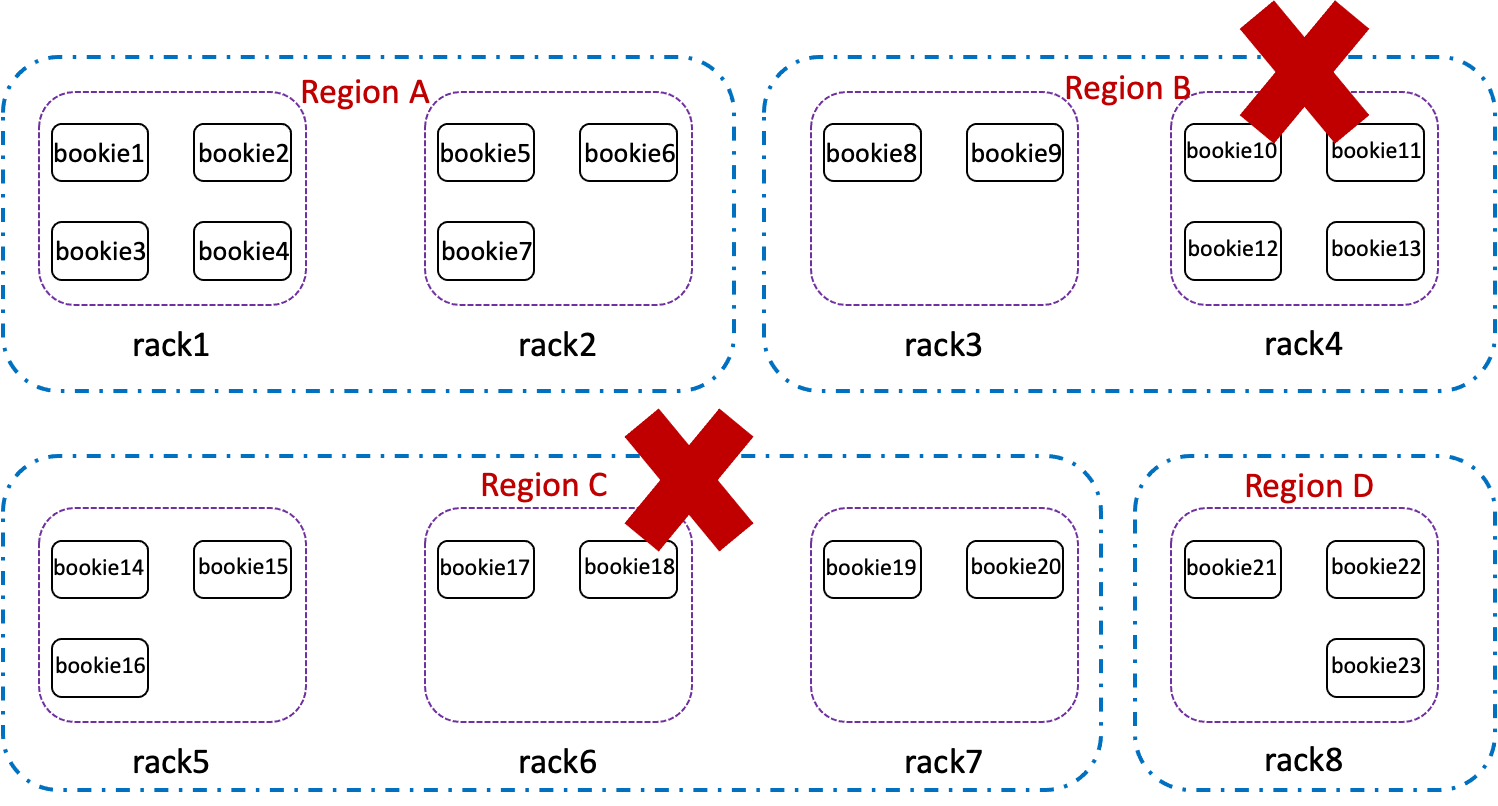

If 2 regions fail as shown in Figure 5, the client behaves as follows. For ledger recovery, suppose the old ledger’s ensemble is <bookie5, bookie17, bookie21>. The bookie client will choose one bookie from Region A or Region D to replace the failed bookie17. For new ledger creation, the bookie client will choose Region A and Region D to write replicas. In Region A, it will fall back to RackawareEnsemblePlacementPolicy and choose 2 bookie instances from rack1 and rack2. For Region D, it will choose one bookie instance from rack8. In the end, it may choose bookie1, bookie6, and bookie23 to write ledger replicas.

Figure 5
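The new-ledger case above can be illustrated with a simplified model: spread the ensemble slots across the surviving regions, then apply a rack-aware pick inside each region. This sketch is not BookKeeper's implementation. The `TOPOLOGY` layout, the region and rack names, and both helper functions are assumptions made for illustration.

```python
import random

# Assumed layout of the two surviving regions: region -> rack -> bookies.
TOPOLOGY = {
    "region-a": {
        "/rack1": ["bookie1", "bookie2", "bookie3"],
        "/rack2": ["bookie4", "bookie5", "bookie6"],
    },
    "region-d": {
        "/rack7": ["bookie19", "bookie20", "bookie21"],
        "/rack8": ["bookie22", "bookie23", "bookie24"],
    },
}


def pick_from_racks(racks, count, rng):
    """Rack-aware pick inside one region: use different racks while possible."""
    pools = {rack: list(b) for rack, b in racks.items() if b}
    if sum(len(b) for b in pools.values()) < count:
        raise ValueError("not enough bookies in this region")
    picked = []
    while len(picked) < count:
        for rack in rng.sample(sorted(pools), len(pools)):
            if len(picked) == count:
                break
            bookie = rng.choice(pools[rack])
            pools[rack].remove(bookie)
            picked.append((rack, bookie))
            if not pools[rack]:
                del pools[rack]
    return picked


def pick_region_aware_ensemble(topology, ensemble_size, rng):
    """Spread slots across regions round-robin, then across racks per region."""
    regions = sorted(r for r, racks in topology.items() if any(racks.values()))
    if not regions:
        raise ValueError("no region has available bookies")
    slots = {region: 0 for region in regions}
    for i in range(ensemble_size):
        slots[regions[i % len(regions)]] += 1

    ensemble = []
    for region in regions:
        for rack, bookie in pick_from_racks(topology[region], slots[region], rng):
            ensemble.append((region, rack, bookie))
    return ensemble


print(pick_region_aware_ensemble(TOPOLOGY, 3, random.Random(3)))
```

With 2 regions and an ensemble size of 3, the first region receives 2 slots, filled from 2 different racks, and the second region receives 1, which matches the shape of the example in the text.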


How data placement policies work

The bookie isolation group makes use of the existing BookKeeper rack-aware placement policy. The “rack” concept can be anything (e.g. rack/region/availability zone). In this example, we use the bin/pulsar-admin bookies set-bookie-rack command to configure the isolation policy.

bin/pulsar-admin bookies set-bookie-rack

The following options are required: [-b | --bookie], [-r | --rack]

The command updates the rack placement information for a specific bookie in the cluster. Note that the bookie address format is `address:port`.

Usage: set-bookie-rack [options]

Options:

- -b, --bookie (required): Bookie address (format: `address:port`)

- -g, --group: Bookie group name (default: default)

- --hostname: Bookie host name

- -r, --rack (required): Bookie rack name

In this command, we can specify the rack name and group name for each bookie. The rack name is used to represent which region or rack this bookie belongs to. You can assign the group name to a specific namespace to achieve namespace-level isolation.

The bin/pulsar-admin bookies set-bookie-rack command writes the configured placement policy into ZooKeeper, and the bookie clients will get the placement policy from ZooKeeper and apply it when choosing ledger ensembles.
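To make the relationship between groups, racks, and namespaces concrete, here is a minimal sketch that models the rack information as nested data and selects the bookies available to a namespace. The nested layout (group, then bookie address, then rack and hostname), the `group2` entry, and the namespace names are all assumptions for illustration, so check the actual data stored in ZooKeeper for your deployment.

```python
import json

# Assumed shape of the rack information: group -> bookie address -> details.
RACK_INFO_JSON = """
{
  "group1": {
    "bookie1:3181": {"rack": "/rack1", "hostname": "bookie1.pulsar.com:3181"},
    "bookie5:3181": {"rack": "/rack2", "hostname": "bookie5.pulsar.com:3181"}
  },
  "group2": {
    "bookie9:3181": {"rack": "/rack3", "hostname": "bookie9.pulsar.com:3181"}
  }
}
"""

# Hypothetical assignment of isolation groups to namespaces.
NAMESPACE_GROUPS = {
    "tenant-a/ns1": ["group1"],
    "tenant-b/ns1": ["group2"],
}


def bookies_in_groups(rack_info, groups):
    """Return {bookie address: rack} for every bookie in the given groups."""
    selected = {}
    for group in groups:
        for address, info in rack_info.get(group, {}).items():
            selected[address] = info["rack"]
    return selected


rack_info = json.loads(RACK_INFO_JSON)
for namespace, groups in NAMESPACE_GROUPS.items():
    print(namespace, bookies_in_groups(rack_info, groups))
```

A namespace bound to `group1` only sees the bookies registered under that group, and the rack names of those bookies then drive the rack-aware or region-aware selection.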

The basic idea of the rack-aware placement policy is this: when the ensemble for a ledger is formed or replicated, the client picks bookies from different racks to reduce the possibility of data unavailability. If bookies from different racks are not available, the policy falls back to choosing randomly across available bookies.

In contrast to the rack-aware policy, the basic idea of the region-aware placement policy is this: when the ensemble for a ledger is formed or replicated, the client picks bookies from different regions, and if more than one bookie of the ensemble falls into the same region, it picks those bookies from different racks within that region.

Another tip for choosing ensembles is to take current disk usage into consideration. When a BookKeeper cluster runs for a long time, you may notice that ledger disk usage is unbalanced across bookies. You can enable disk weight by setting bookkeeperDiskWeightBasedPlacementEnabled=true in conf/broker.conf. After disk weight is enabled, the bookie client takes disk usage into consideration when choosing ensembles for a ledger.
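The idea behind disk-weighted placement can be illustrated with weighted random selection, where bookies with more free space are more likely to be chosen. This is only a sketch of the general idea; BookKeeper's actual weighting logic differs in detail. The free-space figures and the helper name are hypothetical.

```python
import random
from collections import Counter

# Hypothetical free ledger disk space per bookie, in GB.
FREE_GB = {"bookie1": 900, "bookie2": 100, "bookie3": 500}


def pick_weighted_by_free_space(free_space, count, rng):
    """Pick count distinct bookies, favoring those with more free space.

    Weights must be positive; a bookie with zero free space should be
    filtered out before calling this function.
    """
    pool = dict(free_space)
    if count > len(pool):
        raise ValueError("not enough bookies")
    picked = []
    for _ in range(count):
        names = list(pool)
        weights = [pool[name] for name in names]
        choice = rng.choices(names, weights=weights, k=1)[0]
        picked.append(choice)
        del pool[choice]  # sample without replacement
    return picked


rng = random.Random(42)
first_picks = Counter(
    pick_weighted_by_free_space(FREE_GB, 1, rng)[0] for _ in range(2000)
)
print(first_picks.most_common())
```

Over many ledgers, the emptier bookies receive more new data, which gradually evens out disk usage across the cluster.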

Pulsar and BookKeeper’s isolation policy: working together for namespace isolation

As shown in Figure 1 above, we need to configure three parts if we want to enable the isolation policy for both Pulsar brokers and BookKeeper.

For detailed operations, refer to Pulsar Isolation Part IV: Single Cluster Isolation.

How to configure the placement policy

If you want to enable the placement policy, you need to enable it both on the broker and bookie auto recovery side. The following is an example of enabling the region-aware placement policy.

Enable the policy on the broker side

In conf/broker.conf, configure the following field:

bookkeeperClientRegionawarePolicyEnabled=true

To enable MinNumRacksPerWriteQuorum, configure the following fields:

bookkeeperClientMinNumRacksPerWriteQuorum=2

bookkeeperClientEnforceMinNumRacksPerWriteQuorum=true

To enable disk weight placement, configure the following field:

bookkeeperDiskWeightBasedPlacementEnabled=true

Enable the policy on the bookie auto recovery side

In conf/bookkeeper.conf, configure the following fields:

ensemblePlacementPolicy=org.apache.bookkeeper.client.RegionAwareEnsemblePlacementPolicy

reppDnsResolverClass=org.apache.pulsar.zookeeper.ZkBookieRackAffinityMapping

To enable MinNumRacksPerWriteQuorum, configure the following fields:

minNumRacksPerWriteQuorum=2

enforceMinNumRacksPerWriteQuorum=true

To enable disk weight placement, configure the following field:

diskWeightBasedPlacementEnabled=true
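Because the same policy has to be configured in two files, it is easy for the settings to drift apart. The following sketch checks that the broker and auto recovery settings listed above agree. The key names come from this article; the parser, the helper names, and the sample file contents are hypothetical.

```python
def parse_properties(text):
    """Parse simple key=value lines, skipping blanks and # comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


# broker.conf key -> matching bookkeeper.conf key, as listed above.
PAIRED_KEYS = {
    "bookkeeperClientMinNumRacksPerWriteQuorum": "minNumRacksPerWriteQuorum",
    "bookkeeperClientEnforceMinNumRacksPerWriteQuorum": "enforceMinNumRacksPerWriteQuorum",
    "bookkeeperDiskWeightBasedPlacementEnabled": "diskWeightBasedPlacementEnabled",
}
REGION_AWARE_CLASS = "org.apache.bookkeeper.client.RegionAwareEnsemblePlacementPolicy"


def find_mismatches(broker_conf, bookie_conf):
    """Return a list of human-readable differences between the two files."""
    broker = parse_properties(broker_conf)
    bookie = parse_properties(bookie_conf)
    problems = []
    for broker_key, bookie_key in PAIRED_KEYS.items():
        if broker.get(broker_key) != bookie.get(bookie_key):
            problems.append(
                f"{broker_key}={broker.get(broker_key)} "
                f"but {bookie_key}={bookie.get(bookie_key)}"
            )
    on_broker = broker.get("bookkeeperClientRegionawarePolicyEnabled") == "true"
    on_bookie = bookie.get("ensemblePlacementPolicy") == REGION_AWARE_CLASS
    if on_broker != on_bookie:
        problems.append("region-aware policy is enabled on only one side")
    return problems


# Sample contents mirroring the settings in this section.
BROKER_CONF = """
bookkeeperClientRegionawarePolicyEnabled=true
bookkeeperClientMinNumRacksPerWriteQuorum=2
bookkeeperClientEnforceMinNumRacksPerWriteQuorum=true
bookkeeperDiskWeightBasedPlacementEnabled=true
"""
BOOKIE_CONF = """
ensemblePlacementPolicy=org.apache.bookkeeper.client.RegionAwareEnsemblePlacementPolicy
reppDnsResolverClass=org.apache.pulsar.zookeeper.ZkBookieRackAffinityMapping
minNumRacksPerWriteQuorum=2
enforceMinNumRacksPerWriteQuorum=true
diskWeightBasedPlacementEnabled=true
"""

print(find_mismatches(BROKER_CONF, BOOKIE_CONF))
```

A check like this could run before a rollout, reading the real conf/broker.conf and conf/bookkeeper.conf instead of the sample strings.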

For broker and BookKeeper placement policy configuration, refer to the previous sections.

Summary

This article gives an overview of Pulsar isolation policies on both the Pulsar broker side and the BookKeeper side. We also look at how Pulsar and BookKeeper’s isolation policies work together for namespace isolation. For the BookKeeper isolation policy, we explain how the rack-aware and region-aware placement policies work. These data placement policies make it possible to achieve different levels of disaster tolerance.
