Introduction

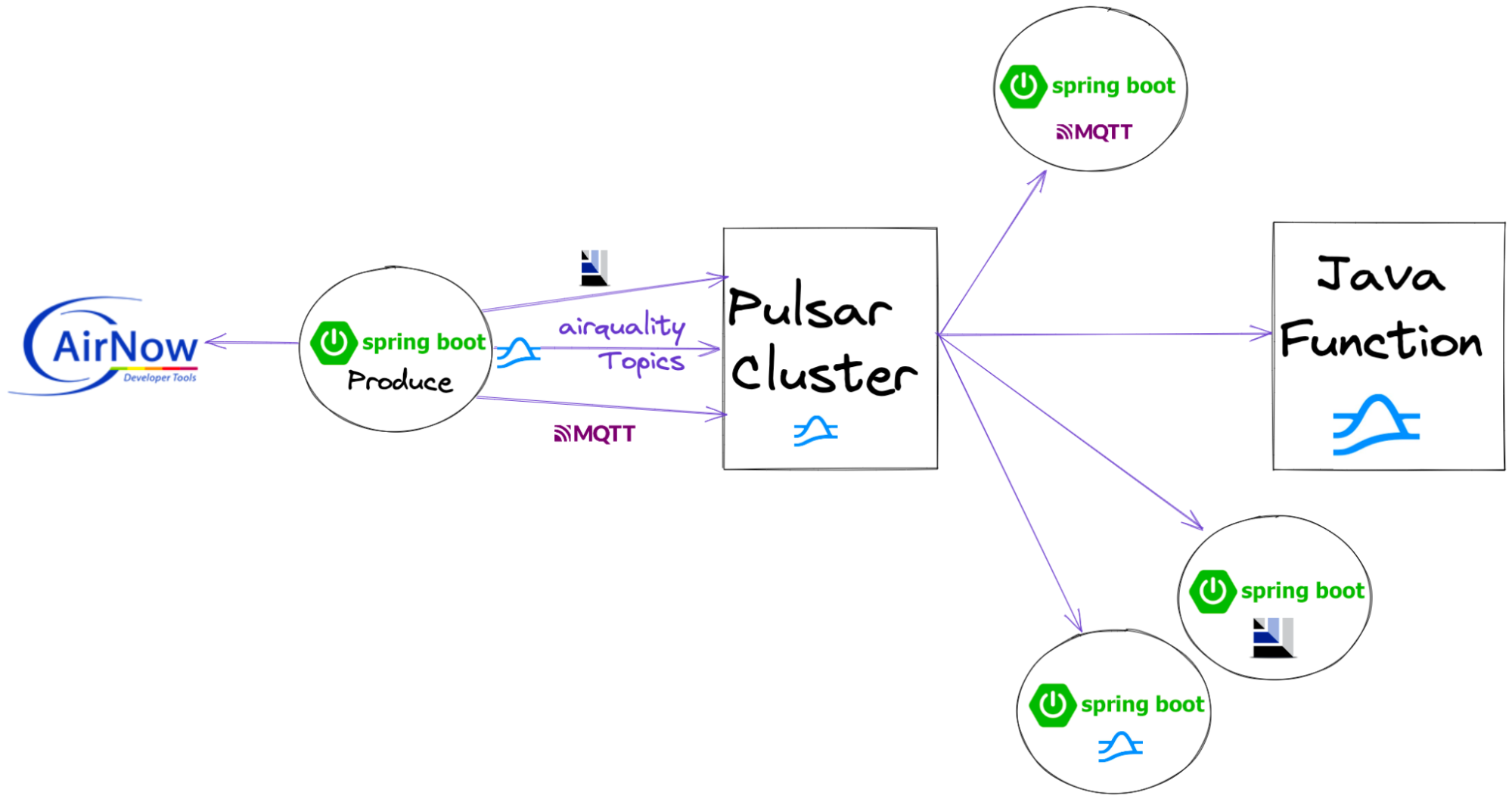

This is the second part of our ongoing blog series Spring into Pulsar. In the first blog, I showed you how easy it is to build a Spring application that communicates with Pulsar using its native protocol. In this second blog, I will build the same application with the other messaging protocols that Pulsar supports (MQTT, AMQP/RabbitMQ, and Kafka) to show off its flexibility. You will see how easy it is to improve the performance of your existing applications with little code change. See this video for details.

In this blog, I used other popular messaging protocols to build the same Spring application with little code change. As we have seen, Spring apps built on MoP (MQTT on Pulsar), AoP (AMQP on Pulsar), and KoP (Kafka on Pulsar) let you keep using legacy protocols while moving many applications to hybrid clouds.
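To make the "little code change" point concrete, here is a minimal sketch, using only the JDK, of how a consumer's settings might differ per protocol. The class name, the `airquality` topic, and the `localhost` endpoints are hypothetical and are not taken from the repositories below. The ports are assumptions too: 6650 is Pulsar's usual binary port, and 1883, 5672, and 9092 are the conventional MQTT, AMQP, and Kafka ports, so your broker's listener configuration may differ.

```java
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;

public class ProtocolEndpoints {

    // Assumed local endpoints, one per protocol the broker might expose.
    // These are illustrative defaults, not values read from a real cluster.
    enum Protocol {
        PULSAR("pulsar://localhost:6650"),
        MQTT("tcp://localhost:1883"),
        AMQP("amqp://localhost:5672"),
        KAFKA("localhost:9092");

        final String endpoint;

        Protocol(String endpoint) {
            this.endpoint = endpoint;
        }
    }

    // Builds the settings a hypothetical consumer would need.
    // Only the protocol and endpoint vary; the topic stays the same.
    static Map<String, String> consumerSettings(Protocol protocol, String topic) {
        Map<String, String> settings = new LinkedHashMap<>();
        settings.put("protocol", protocol.name().toLowerCase(Locale.ROOT));
        settings.put("endpoint", protocol.endpoint);
        settings.put("topic", topic);
        return settings;
    }

    public static void main(String[] args) {
        // Print one settings map per protocol for the same (hypothetical) topic.
        for (Protocol protocol : Protocol.values()) {
            System.out.println(consumerSettings(protocol, "airquality"));
        }
    }
}
```

In a real Spring app these values would more likely live in `application.properties` and be handed to the matching client library (an MQTT, AMQP, or Kafka client); the sketch only shows that the topic and application logic can stay the same while the endpoint changes.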

Source Code

- https://github.com/tspannhw/airquality

- https://github.com/tspannhw/pulsar-airquality-function

- https://github.com/tspannhw/airquality-mqtt-consumer

- https://github.com/tspannhw/airquality-amqp-consumer

- https://github.com/tspannhw/airquality-kafka-consumer

- https://github.com/tspannhw/airquality-consumer

- https://github.com/tspannhw/FLiPN-AirQuality-Checks

- https://github.com/tspannhw/FLiPN-AirQuality-REST

- https://github.com/david-streamlio/multi-protocol-pulsar

Resources

- https://www.flipstack.dev/

- https://github.com/majusko/pulsar-java-spring-boot-starter

- https://github.com/datastax/reactive-pulsar

- https://pulsar.apache.org/docs/en/client-libraries-java/

- https://dzone.com/articles/simple-apache-nifi-operations-dashboard-part-2-spr

- https://spring.io/blog/2015/01/30/why-12-factor-application-patterns-microservices-and-cloudfoundry-matter

- https://www.slideshare.net/bunkertor/brownbag001-spring-ioc-from-2012

- /blog/engineering/2022-04-07-pulsar-vs-kafka-benchmark/

- /blog/engineering/2022-04-14-what-the-flip-is-the-flip-stack/

- /blog/release/2022-03-07-failure-is-not-an-option-its-a-given/

- /blog/engineering/2022-03-10-apache-pulsar-client-application-best-practices/

- /blog/engineering/2021-12-14-developing-event-driven-microservices-with-apache-pulsar/

More on Apache Pulsar

- Learn the Pulsar Fundamentals: While this blog did not cover the Pulsar fundamentals, there are great resources available to help you learn more. If you are new to Pulsar, we recommend taking the self-paced Pulsar courses or the instructor-led Pulsar training developed by some of the original creators of Pulsar. Either will get you started with Pulsar and help you build streaming applications sooner.

- Spin up a Pulsar Cluster in Minutes: If you want to try building microservices without having to set up a Pulsar cluster yourself, sign up for StreamNative Cloud today. StreamNative Cloud is a simple, fast, and cost-effective way to run Pulsar in the public cloud.

- 3-Part Webinar Series: Building Event-Driven Microservices with Apache Pulsar. Watch the webinars here and find their source code here.

- Doc: How to develop Pulsar Functions

- Blog: Function Mesh - Simplify Complex Streaming Jobs in Cloud